InternLM Bootcamp L1: Running InternLM Demos on 8 GB of GPU Memory

Task overview

Basic task (completing it clears this level)

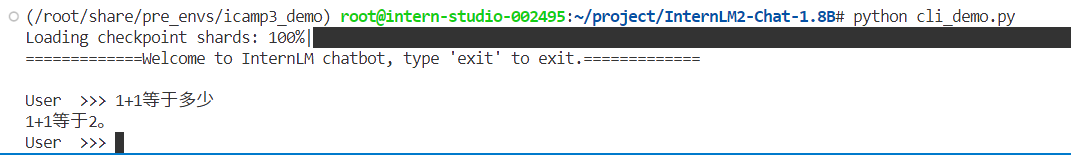

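- Deploy the InternLM2-Chat-1.8B model with the Cli Demo, generate a 300-character short story, and record the reproduction process with screenshots.

Advanced tasks (not required to clear this level)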

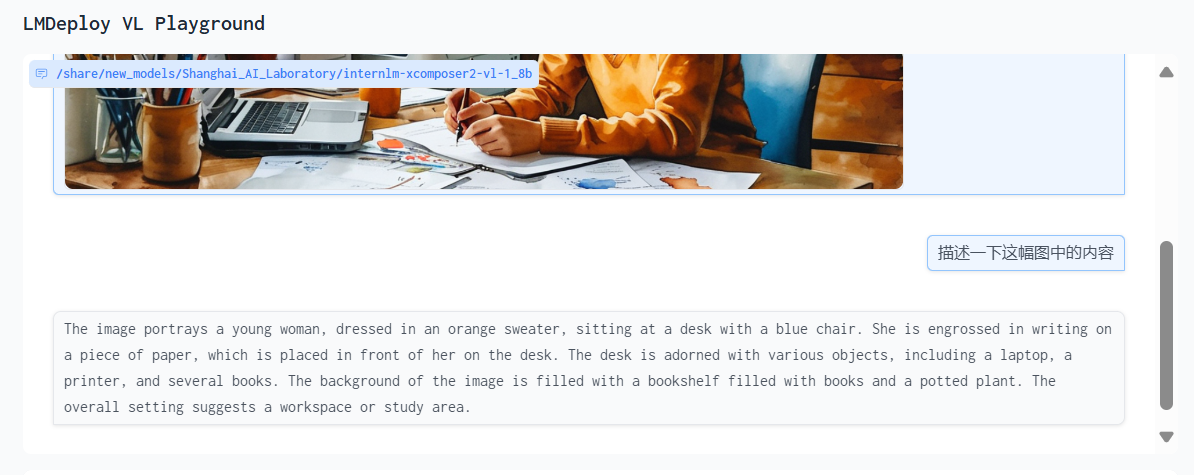

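- Deploy InternLM-XComposer2-VL-1.8B with LMDeploy, complete one image-text understanding conversation, and record the reproduction process with screenshots.

- Deploy InternVL2-2B with LMDeploy, complete one image-text understanding conversation, and record the reproduction process with screenshots.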

Walkthrough

Activate the environment

    conda activate /root/share/pre_envs/icamp3_demo

Deploy InternLM2-Chat-1.8B from the command line

Create cli_demo.py

    import torch
    from transformers import AutoTokenizer, AutoModelForCausalLM

    # Local path to the model weights on the course machine
    model_name_or_path = "/root/share/new_models/Shanghai_AI_Laboratory/internlm2-chat-1_8b"

    # trust_remote_code=True is required because the model ships its own modeling code
    tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, trust_remote_code=True, device_map='cuda:0')
    model = AutoModelForCausalLM.from_pretrained(model_name_or_path, trust_remote_code=True, torch_dtype=torch.bfloat16, device_map='cuda:0')
    model = model.eval()

    system_prompt = """You are an AI assistant whose name is InternLM (书生·浦语).
    - InternLM (书生·浦语) is a conversational language model that is developed by Shanghai AI Laboratory (上海人工智能实验室). It is designed to be helpful, honest, and harmless.
    - InternLM (书生·浦语) can understand and communicate fluently in the language chosen by the user such as English and 中文.
    """

    # The history starts with the system prompt paired with an empty reply
    messages = [(system_prompt, '')]

    print("=============Welcome to InternLM chatbot, type 'exit' to exit.=============")

    while True:
        input_text = input("\nUser >>> ")
        # Removes every space from the input, including spaces between English words
        input_text = input_text.replace(' ', '')
        if input_text == "exit":
            break

        # Print only the part of the response that has not been printed yet
        length = 0
        for response, _ in model.stream_chat(tokenizer, input_text, messages):
            if response is not None:
                print(response[length:], flush=True, end="")
                length = len(response)

Run it:

    python cli_demo.py
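The inner loop of cli_demo.py relies on each streamed `response` being the full text generated so far, so it prints only the slice after the previously printed length. The sketch below reproduces that pattern without a GPU or model. `fake_stream_chat` is a hypothetical stand-in for `model.stream_chat`, not part of any library, and the assumption that responses are cumulative is inferred from the `response[length:]` slicing in the script.

```python
from typing import Iterable, Iterator


def fake_stream_chat(text: str, step: int = 4) -> Iterator[str]:
    """Hypothetical stand-in for a streaming chat call.

    Yields the cumulative response so far, growing by `step` characters each time.
    """
    for end in range(step, len(text) + step, step):
        yield text[:end]


def incremental_chunks(responses: Iterable[str]) -> Iterator[str]:
    """Convert cumulative responses into only the newly added pieces."""
    length = 0
    for response in responses:
        if response is not None:
            yield response[length:]
            length = len(response)


if __name__ == "__main__":
    story = "Once upon a time, a small model told a short story."
    # Print each new piece as it arrives, exactly like the CLI loop does.
    for chunk in incremental_chunks(fake_stream_chat(story)):
        print(chunk, end="", flush=True)
    print()
```

Tracking the printed length keeps the terminal output free of repeated text, at the cost of assuming the stream never rewrites earlier characters.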

Deploy InternLM2-Chat-1.8B with the Streamlit Web Demo

Run

    cd /root/project/Tutorial/tools
    streamlit run streamlit_demo.py --server.address 127.0.0.1 --server.port 6006

Generate a short story
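Before opening the page in a browser, it can help to confirm that something is actually listening on the address and port passed to `streamlit run`. The following is a minimal sketch using only the Python standard library. `is_port_open` is a hypothetical helper name, and the check only tells you that a TCP connection succeeds, not that the Streamlit app itself is healthy.

```python
import socket


def is_port_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to (host, port) succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    # 127.0.0.1 and 6006 match --server.address and --server.port in the command above.
    print("Streamlit reachable:", is_port_open("127.0.0.1", 6006))
```

Run it on the same machine where the demo was started, since the server is bound to 127.0.0.1.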

Deploy the InternLM-XComposer2-VL-1.8B model with LMDeploy

    conda activate /root/share/pre_envs/icamp3_demo
    lmdeploy serve gradio /share/new_models/Shanghai_AI_Laboratory/internlm-xcomposer2-vl-1_8b --cache-max-entry-count 0.1

Deploy the InternVL2-2B model with LMDeploy

    conda activate /root/share/pre_envs/icamp3_demo
    lmdeploy serve gradio /share/new_models/OpenGVLab/InternVL2-2B --cache-max-entry-count 0.1
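Both LMDeploy commands pass `--cache-max-entry-count 0.1`, which limits how much GPU memory the k/v cache may take. The rough estimate below shows why a small ratio matters on an 8 GB card. It rests on several assumptions: the ratio is applied to the memory left after the weights are loaded (the behaviour described for recent LMDeploy versions), the weights are stored at 2 bytes per parameter, and the 2e9 parameter count is an illustrative round number rather than a measured value. The function names are hypothetical.

```python
def weights_size_gib(num_params: float, bytes_per_param: int = 2) -> float:
    """Approximate size of the model weights in GiB (2 bytes per parameter for 16-bit weights)."""
    return num_params * bytes_per_param / 1024**3


def kv_cache_budget_gib(total_gib: float, weights_gib: float, cache_ratio: float) -> float:
    """Estimate the k/v cache budget as a fraction of the memory left after loading weights."""
    free_gib = max(total_gib - weights_gib, 0.0)
    return free_gib * cache_ratio


if __name__ == "__main__":
    weights = weights_size_gib(2.0e9)  # assumed parameter count, for illustration only
    for ratio in (0.1, 0.8):
        budget = kv_cache_budget_gib(8.0, weights, ratio)
        print(f"ratio={ratio}: about {budget:.2f} GiB reserved for the k/v cache")
```

A lower ratio leaves more headroom for activations and the vision encoder, while shortening the context that can be cached. Actual usage depends on the LMDeploy version and the model, so treat the numbers as an order-of-magnitude guide.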

Summary

Our AI is truly impressive, and the future of multimodal models looks promising!
